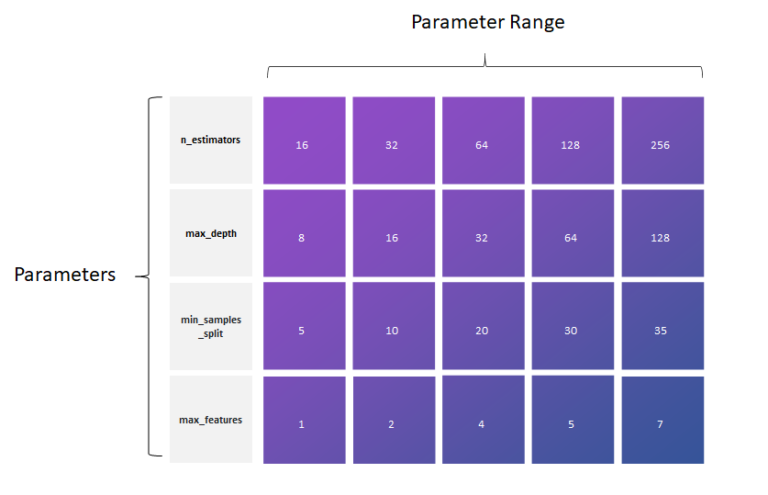

So we've built a random forest model to solve our machine learning problem (perhaps by following this end-to-end guide), but we're not too impressed by the results. What are our options? As we saw in the first part of this series, our first step should be to gather more data and perform feature engineering. These steps usually have the greatest payoff in terms of time invested versus improved performance, but once we have exhausted all data sources, it's time to move on to model hyperparameter tuning. This post focuses on optimizing the random forest model in Python using Scikit-Learn tools. Although this article builds on part one, it stands fully on its own, and we will cover many widely applicable machine learning concepts. I have included Python code where it is most instructive. Full code and data to follow along can be found on the project GitHub page.

A Brief Explanation of Hyperparameter Tuning

The best way to think about hyperparameters is as the settings of an algorithm that can be adjusted to optimize performance, just as we might turn the knobs of an AM radio to get a clear signal (or your parents might have!). While model parameters are learned during training, such as the slope and intercept in a linear regression, hyperparameters must be set by the data scientist before training. In the case of a random forest, hyperparameters include the number of decision trees in the forest and the number of features considered by each tree when splitting a node. (The parameters of a random forest are the variables and thresholds used to split each node, learned during training.)
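To make the distinction between hyperparameters and learned parameters concrete, here is a minimal sketch using Scikit-Learn. The synthetic dataset and the specific values (50 trees, `max_features="sqrt"`) are assumptions chosen for illustration, not settings taken from the article.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic data stands in for a real problem (an assumption for illustration).
X, y = make_classification(
    n_samples=300, n_features=10, n_informative=4, random_state=0
)

# Hyperparameters: chosen by us before training.
model = RandomForestClassifier(n_estimators=50, max_features="sqrt", random_state=0)
hyperparams = model.get_params()

model.fit(X, y)

# Parameters: learned during training. Each fitted tree stores the feature
# index and threshold used at every node; leaf nodes carry a negative
# placeholder feature index, so we keep only the real split nodes.
first_tree = model.estimators_[0].tree_
is_split = first_tree.feature >= 0
split_features = first_tree.feature[is_split]
split_thresholds = first_tree.threshold[is_split]

print("n_estimators (set by us):", hyperparams["n_estimators"])
print("max_features (set by us):", hyperparams["max_features"])
print("learned split features in first tree:", split_features[:5])
print("learned split thresholds in first tree:", split_thresholds[:5])
```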
You can run similar tests for other parameters to build intuition (or get positive confirmation) about your parameter values.

Breiman (2001) commented that the randomness used in the trees has to aim for low correlation while maintaining reasonable strength. This is better unpacked in this paper that was linked by an answer above.

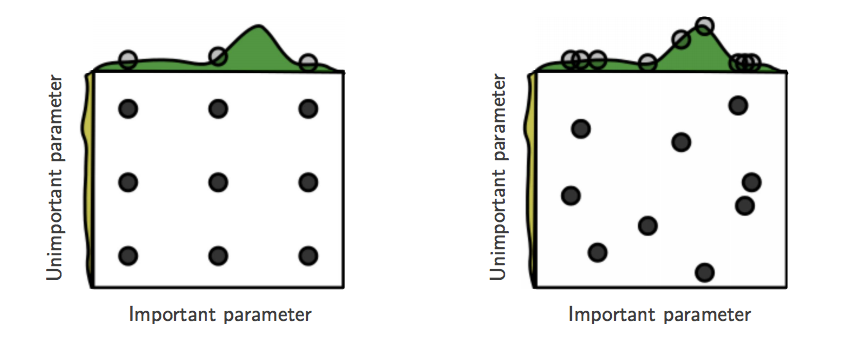

Let P be the number of features in your data, X, and N be the total number of examples. mtry is the parameter in RF that determines the number of features you subsample from all P before you determine the best split. nodesize is the parameter that determines the minimum number of observations in your leaf nodes (i.e. you don't want to split a node beyond this).

When a parameter has a wide range, it is suggested to search it on a log scale. Why? You typically see larger jumps in accuracy (or whatever metric you use) when mtry goes from 1 to 10 than when it goes from 90 to 100, so testing every number between 90 and 100 would waste time. This is true of some other parameters as well. The second idea is to use a modern hyperparameter search algorithm such as a Bayesian optimization scheme; here I would suggest watching this video as well as some of the resources linked by answers in this thread.

Next you should get a feel for your data. Do a small number of predictor variables have an outsized effect on the response (higher mtry may do better in this case)? Or do a lot of other variables with small effects matter as well (lower mtry does better)? So you see, this will affect your choice of mtry. Just fit a linear model to get a sense of feature importance. Maybe you will catch a lucky break and get a couple of features with really high importance, and your model is simple. If there are a lot of noise features, then a lower mtry is not so useful.

You can also identify noise features by randomly permuting the values of a single feature (i.e. just one column of X) and comparing the fit of a linear model against Y before and after. Permuting a noisy feature will not make the fit noticeably worse, and may even make it slightly better by chance. (Word of caution: interactions between features may make this last step a bit dicey to use as a guiding tool to determine noise.)

mtry ~ sqrt(P) is the rule of thumb, based on the academic paper linked in some of the answers here.
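Two of the ideas in this answer, searching mtry on a log scale and flagging noise features by permutation, can be sketched with NumPy alone. The helper names (`log_spaced_mtry`, `r_squared`, `permutation_drop`) and the synthetic data are hypothetical, introduced only for illustration; the linear fit is plain least squares, which is one reasonable reading of "a linear model" here.

```python
import numpy as np

def log_spaced_mtry(n_features, n_candidates=6):
    """Candidate mtry values spaced evenly on a log scale from 1 to n_features."""
    raw = np.logspace(0, np.log10(n_features), num=n_candidates)
    return sorted(set(int(round(float(v))) for v in raw))

def r_squared(X, y):
    """R^2 of an ordinary least-squares fit of y on X (with an intercept)."""
    A = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return 1.0 - resid.var() / y.var()

def permutation_drop(X, y, col, rng):
    """Drop in R^2 after shuffling one column of X; near zero suggests noise."""
    X_perm = X.copy()
    X_perm[:, col] = rng.permutation(X_perm[:, col])
    return r_squared(X, y) - r_squared(X_perm, y)

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 3))
# Hypothetical data: column 0 drives y, column 1 helps a little,
# column 2 is pure noise.
y = 3.0 * X[:, 0] + 0.5 * X[:, 1] + rng.normal(scale=0.5, size=500)

print("mtry candidates for P=100:", log_spaced_mtry(100))
print("sqrt(P) rule of thumb for P=100:", int(round(np.sqrt(100))))
for col in range(X.shape[1]):
    print(f"column {col}: R^2 drop after permutation = "
          f"{permutation_drop(X, y, col, rng):.3f}")
```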
Number of trees is not a parameter that should be tuned; it should usually just be set large enough. There is no risk of overfitting in a random forest as the number of trees grows, because the trees are trained independently of each other (see our paper for more information about this). Max depth is a parameter that most of the time should be set as high as possible, but better performance can sometimes be achieved by setting it lower. There are more parameters in random forest that can be tuned (see the linked discussion). If you have more than one parameter to tune, you can also try random search instead of grid search (see the linked arguments for random search). If you just want to tune these two parameters, I would set ntree to 1000 and try out different values of max_depth. You can evaluate your predictions using the out-of-bag observations, which is much faster than cross-validation.
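The suggestion to fix the number of trees, vary max_depth, and score each setting on the out-of-bag observations might look like the sketch below in Scikit-Learn, where ntree corresponds to `n_estimators`. The synthetic dataset and the depth candidates are assumptions for illustration, and the forest uses 200 trees rather than the suggested 1000 only to keep the demo quick.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic data stands in for a real problem (an assumption for illustration).
X, y = make_classification(
    n_samples=500, n_features=20, n_informative=5, random_state=0
)

oob_by_depth = {}
for max_depth in [2, 4, 8, None]:  # None lets each tree grow fully
    forest = RandomForestClassifier(
        n_estimators=200,   # fixed and "large enough"; the text suggests 1000
        max_depth=max_depth,
        bootstrap=True,     # out-of-bag scoring requires bootstrap sampling
        oob_score=True,     # score each sample using trees that did not see it
        random_state=0,
    )
    forest.fit(X, y)
    oob_by_depth[max_depth] = forest.oob_score_

best_depth = max(oob_by_depth, key=oob_by_depth.get)
for depth, score in oob_by_depth.items():
    print(f"max_depth={depth}: OOB accuracy = {score:.3f}")
print("best max_depth by OOB accuracy:", best_depth)
```

Each forest is fit once per candidate depth, whereas k-fold cross-validation would fit k forests per candidate, which is where the speed advantage mentioned above comes from.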
